

Webscraper skipping pagination

5/20/2023

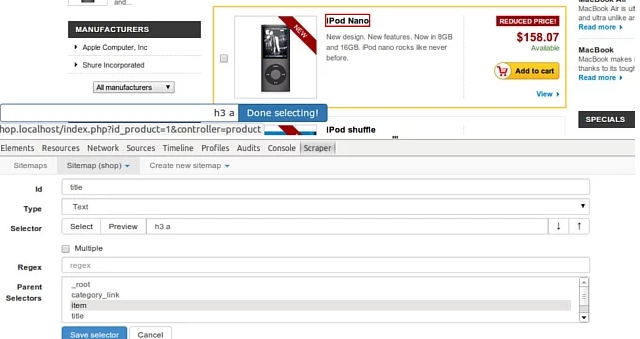

These instances will run in parallel and scrape the website simultaneously. You can accomplish this by introducing two GET parameters as below: Instead of having a script that iterates over all pages, you can modify the script to work on smaller chunks and then launch several instances of the script in parallel.Īll you have to do is pass some parameters to the script to define the boundaries of the chunk. It's possible by using HTTP GET parameters.Ĭonsider the paging example presented earlier. The idea behind this approach to parallel scraping is to make the scraping script ready to be run on multiple instances. Several libraries can support you, but the most effective solution to perform parallel scraping in PHP doesn't require any of them. Let's now dig into how to make your script faster! Parallel Scrapingĭealing with multi-threading in PHP is complex. You just learned how to avoid being blocked. CURLOPT_PROXYTYPE can take the following values: CURLPROXY_HTTP (default), CURLPROXY_SOCKS4, CURLPROXY_SOCKS5, CURLPROXY_SOCKS4A, or CURLPROXY_SOCKS5_HOSTNAME. With most web proxies, setting the URL of the proxy in the first line is enough. You can set a web proxy with cURL as follows: Find out more about how you can use them to avoid blocks. It makes it harder for each IP offered by the service to be banned, and even if that happened, you would get a new IP quickly. That means that the IP exposed by the proxy server will frequently change over time or with each request. On the other hand, paid proxy services are more reliable and generally come with IP rotation. However, you shouldn't rely on them for a production script. Several free proxies are available online, but most are short-lived, unreliable, and often unavailable. When performing requests through a proxy, the target website will see the IP address of the proxy server instead of yours. A web proxy is an intermediary server between your machine and the rest of the computers on the internet. 
One of the best ways to do it is through a proxy server. In other words, to prevent blocks on an IP, you must find a way to hide it. It'll be blocked if the same IP makes many requests in a short time. The primary check looks at the IP from which the requests come. Using Web Proxies to Hide Your IPĪnti-scraping systems tend to block users from visiting many pages in a short amount of time. Then, iterate through them to extract all the required URLs from the href attribute as follows:įind out about what ZenRows has to offer when it comes to setting custom headers. page-numbers a CSS selector to select all the pagination HTML elements on the page. In particular, you can use HtmlDomParser with the. Therefore, if you want to use a CSS selector to pick the elements in the DOM, you should use the CSS class along with other selectors. It's precisely what happens with page-numbers in the scrapeme.live page. Note that a CSS class doesn't uniquely identify an HTML element, and many nodes could have the same class. In the WebTools window, you can see that the page-numbers CSS class identifies the pagination HTML elements.

Selecting the "Inspect" option to open the DevTools windowĪt this point, the browser should open a DevTools window or section with the DOM element highlighted, as below: The DevTools Window after selecting a pagination number HTML element Right-click the pagination number HTML element and select the "Inspect" option. Let's now retrieve the list of all pagination links to crawl the entire website section. You can now use HtmlDomParser to browse the DOM of the HTML page and start the data extraction. You can add this to your project's dependencies with the following command: Then, you also require the following Composer library: If you don't have these installed on your systems, you can download them by following the links above. That is the list of prerequisites you need for the simple scraper to work: And then how to get around the most popular anti-scraping systems and learn more advanced techniques and concepts, such as parallel scraping and headless browsers.įollow this tutorial and become an expert in web scraping with PHP! Let's not waste more time and build our first scraper in PHP.

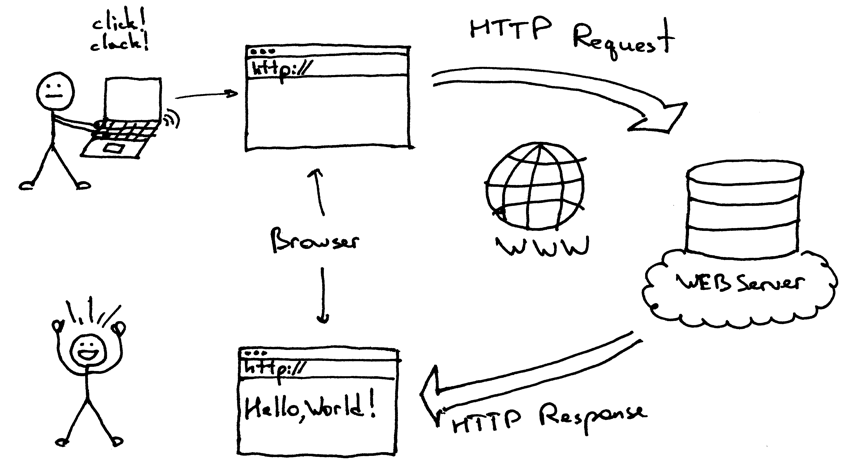

In this tutorial, you'll learn the basics of web scraping in PHP. Here, you'll learn how to build a web scraper in PHP using one of the most popular web scraping libraries. As a result, several libraries help you scrape data from a website. Web scraping has become increasingly popular and is now a trending topic in the IT community.

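For the pagination step described above, here is a minimal sketch of collecting the pagination URLs. It assumes PHP's built-in DOM extension (DOMDocument and DOMXPath) in place of HtmlDomParser so that it runs without Composer; the XPath expression emulates the `.page-numbers a` CSS selector. The helper name `extractPaginationLinks()` and the sample HTML are hypothetical.

```php
<?php
// Sketch: collect pagination URLs from a page's HTML.
// Assumption: the built-in DOM extension replaces HtmlDomParser here;
// extractPaginationLinks() is a hypothetical helper name.

function extractPaginationLinks(string $html): array
{
    $dom = new DOMDocument();
    // Real-world HTML is rarely valid; keep libxml warnings out of the output.
    $previous = libxml_use_internal_errors(true);
    $dom->loadHTML($html);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    $xpath = new DOMXPath($dom);
    // XPath equivalent of the ".page-numbers a" CSS selector: every <a> nested
    // inside an element whose class list contains "page-numbers".
    $query = "//*[contains(concat(' ', normalize-space(@class), ' '), ' page-numbers ')]//a";

    $links = [];
    foreach ($xpath->query($query) as $anchor) {
        $href = $anchor->getAttribute('href');
        // Several links (e.g. "2" and "Next") can point to the same page,
        // so keep each URL only once.
        if ($href !== '' && !in_array($href, $links, true)) {
            $links[] = $href;
        }
    }
    return $links;
}

// Usage with a small, made-up pagination snippet.
$html = '<ul class="page-numbers">'
    . '<li><span class="page-numbers current">1</span></li>'
    . '<li><a class="page-numbers" href="https://scrapeme.live/shop/page/2/">2</a></li>'
    . '<li><a class="page-numbers" href="https://scrapeme.live/shop/page/3/">3</a></li>'
    . '<li><a class="next page-numbers" href="https://scrapeme.live/shop/page/2/">Next</a></li>'
    . '</ul>';

print_r(extractPaginationLinks($html));
```

Matching on the class inside a space-padded `@class` string keeps similarly named classes (for example `page-numbers-extra`) from matching by accident.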

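To illustrate the proxy setup described in the "Using Web Proxies to Hide Your IP" section, the sketch below builds the cURL options for a proxied request. The helper name `proxyCurlOptions()`, the optional credentials parameter, and the 203.0.113.10 address (a documentation-only IP range) are assumptions for illustration, not a specific provider's configuration.

```php
<?php
// Sketch: cURL options that route a request through a web proxy.
// Assumptions: proxyCurlOptions() is a hypothetical helper, and the proxy
// address used below is a placeholder, not a real server.

function proxyCurlOptions(string $proxyUrl, int $proxyType = CURLPROXY_HTTP, ?string $credentials = null): array
{
    $options = [
        CURLOPT_PROXY => $proxyUrl,       // the only setting most web proxies need
        CURLOPT_PROXYTYPE => $proxyType,  // CURLPROXY_HTTP is cURL's default
        CURLOPT_RETURNTRANSFER => true,   // return the body instead of printing it
        CURLOPT_TIMEOUT => 30,            // give up on slow or dead proxies
    ];
    if ($credentials !== null) {
        // Some paid proxy services may require "user:password" authentication.
        $options[CURLOPT_PROXYUSERPWD] = $credentials;
    }
    return $options;
}

// Usage: fetch a page through an HTTP proxy.
// $ch = curl_init('https://scrapeme.live/shop/');
// curl_setopt_array($ch, proxyCurlOptions('http://203.0.113.10:8080'));
// $html = curl_exec($ch);
```

Returning an options array rather than configuring a handle directly makes it easy to reuse the same proxy settings across many requests, or to swap in a SOCKS proxy by passing a different CURLOPT_PROXYTYPE value.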

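For the parallel scraping approach described above, here is a minimal sketch of how a script could read its chunk boundaries from two GET parameters. The parameter names `from` and `to`, the helper names, the 48-page total, and the `/page/N/` URL pattern are all assumptions made for illustration; the original script may differ.

```php
<?php
// Sketch: make the scraper work on a chunk of pages set by two GET parameters.
// Assumptions: the "from"/"to" names, the helper names, and the "/page/N/"
// URL pattern are illustrative, not taken from the original script.

function readChunkBoundaries(array $query, int $lastPage): array
{
    $options = ['options' => ['min_range' => 1, 'max_range' => $lastPage]];
    $from = filter_var($query['from'] ?? null, FILTER_VALIDATE_INT, $options);
    $to = filter_var($query['to'] ?? null, FILTER_VALIDATE_INT, $options);
    // Missing, non-numeric, or out-of-range values fall back to the full range.
    return [$from === false ? 1 : $from, $to === false ? $lastPage : $to];
}

function chunkPageUrls(string $baseUrl, int $from, int $to): array
{
    $urls = [];
    for ($page = $from; $page <= $to; $page++) {
        $urls[] = rtrim($baseUrl, '/') . "/page/{$page}/";
    }
    return $urls;
}

// Inside the scraper script, e.g. requested as scraper.php?from=5&to=8
// (48 is an assumed total page count):
// [$from, $to] = readChunkBoundaries($_GET, 48);
// foreach (chunkPageUrls('https://scrapeme.live/shop', $from, $to) as $url) {
//     // download and parse $url here
// }
```

Validating the parameters matters because each instance is triggered by an HTTP request, so a malformed query string should not make a single instance try to scrape an arbitrary page range.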

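To launch the chunked instances described in the "Parallel Scraping" section so that they run simultaneously, one option is to call each instance's URL concurrently with PHP's curl_multi functions. This is a sketch under assumptions: the scraper URL, the `from`/`to` parameter names, and the helper names `instanceUrls()` and `launchInstances()` are hypothetical.

```php
<?php
// Sketch: split the page range into chunks and trigger one scraper instance
// per chunk concurrently with curl_multi. Assumptions: the scraper URL and the
// "from"/"to" parameter names are hypothetical.

function instanceUrls(string $scriptUrl, int $lastPage, int $instances): array
{
    // Chunk size rounded up so that every page belongs to some chunk.
    $size = (int) ceil($lastPage / max(1, $instances));
    $urls = [];
    for ($from = 1; $from <= $lastPage; $from += $size) {
        $to = min($from + $size - 1, $lastPage);
        $urls[] = $scriptUrl . '?' . http_build_query(['from' => $from, 'to' => $to]);
    }
    return $urls;
}

function launchInstances(array $urls, int $timeout = 300): array
{
    $multi = curl_multi_init();
    $handles = [];
    foreach ($urls as $i => $url) {
        $ch = curl_init($url);
        curl_setopt_array($ch, [CURLOPT_RETURNTRANSFER => true, CURLOPT_TIMEOUT => $timeout]);
        curl_multi_add_handle($multi, $ch);
        $handles[$i] = $ch;
    }
    // Drive all transfers at once until none is still running.
    do {
        $status = curl_multi_exec($multi, $running);
        if ($running) {
            curl_multi_select($multi); // wait for activity instead of busy-looping
        }
    } while ($running && $status === CURLM_OK);

    // Collect each instance's response, keyed like the input URLs.
    $responses = [];
    foreach ($handles as $i => $ch) {
        $responses[$i] = curl_multi_getcontent($ch);
        curl_multi_remove_handle($multi, $ch);
    }
    curl_multi_close($multi);
    return $responses;
}

// Usage: run 4 instances of a hypothetical local scraper over 48 pages.
// $responses = launchInstances(instanceUrls('http://localhost/scraper.php', 48, 4));
```

Because each instance runs as a separate request handled by the web server, the parallelism comes from the server processing those requests concurrently, not from threads inside a single PHP script.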